Imagine uploading a photo to social media and, within seconds, your friends are automatically tagged. Or unlocking your smartphone with just a glance. It feels like magic — but behind that seamless experience lies one of the most powerful and controversial technologies of our time: AI-powered face recognition.

From airport security systems to smartphone authentication, from law enforcement databases to personalized marketing tools, face recognition technology has quietly reshaped the way the digital world identifies us. But how exactly does artificial intelligence learn to recognize your face among billions? And what are the real security and privacy implications behind this innovation?

In this deep-dive guide, we’ll explore how face recognition models work, the science behind facial embeddings, the role of deep learning, and the serious security concerns surrounding biometric identification.

What Is Face Recognition in Artificial Intelligence?

Face recognition is a branch of computer vision and artificial intelligence that enables machines to identify or verify a person based on their facial features. Unlike simple face detection — which only identifies the presence of a face in an image — face recognition determines whose face it is.

At its core, modern face recognition relies heavily on deep learning, especially Convolutional Neural Networks (CNNs), to extract patterns from facial images. These systems are trained on millions of labeled face images. The features are not specified by hand: the network discovers for itself which patterns, loosely related to things like eye spacing, jaw structure, cheekbone alignment, and skin texture, best separate one person from another.

Many breakthroughs in face recognition stem from neural network research such as FaceNet, published by Google researchers in 2015, and from implementations built on frameworks such as TensorFlow and PyTorch. These tools allow developers to build highly accurate models for identifying individuals.

Step 1: Face Detection – Finding the Face First

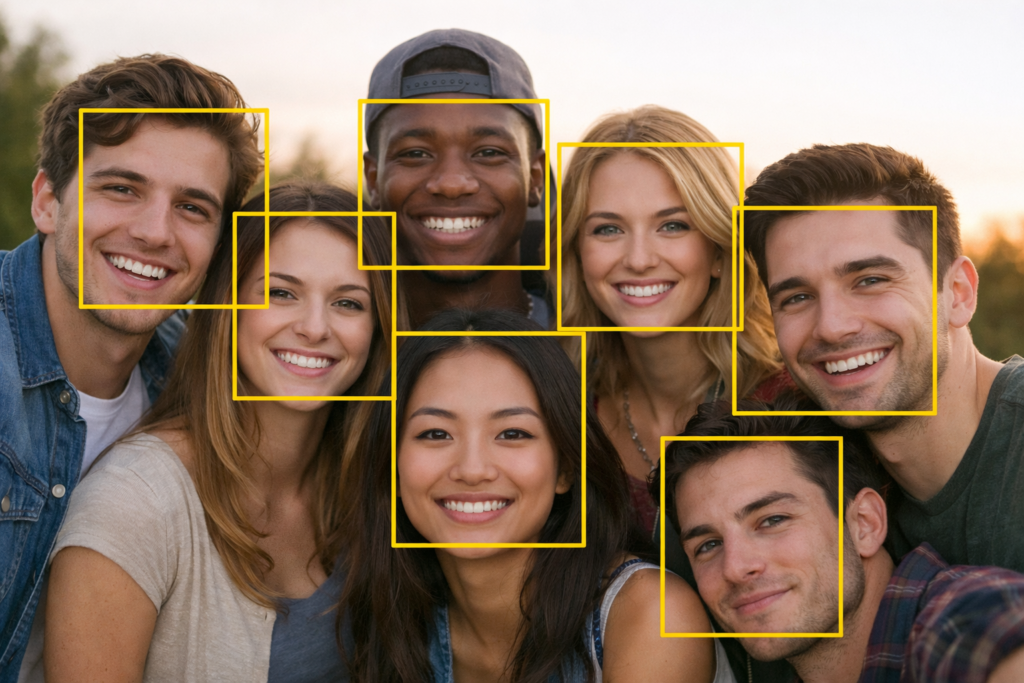

Before recognizing someone, AI must first detect the presence of a face within an image. This involves scanning the image, typically at several scales, to locate the regions that contain a face.

Earlier systems used handcrafted algorithms like Haar cascades, but modern systems rely on deep neural networks trained specifically for face detection. These networks draw bounding boxes around faces across a wide range of lighting conditions, poses, and backgrounds.

Face detection models are trained using large datasets where faces are annotated with coordinates. Once the system detects a face, it crops the region and prepares it for further analysis.

This step is critical because even a slight misalignment can affect recognition accuracy in later stages.
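As a rough illustration of the cropping step, the sketch below assumes a detector has already returned a bounding box as `(x, y, width, height)`. The function name `crop_face`, the `margin` parameter, and the toy image are hypothetical choices for this example, not part of any particular library.

```python
from typing import List, Tuple

Image = List[List[int]]          # grayscale image as rows of pixel values
Box = Tuple[int, int, int, int]  # (x, y, width, height), as a detector might return


def crop_face(image: Image, box: Box, margin: float = 0.2) -> Image:
    """Crop a detected face, expanding the box by `margin` on every side."""
    img_h, img_w = len(image), len(image[0])
    x, y, w, h = box
    pad_x, pad_y = int(w * margin), int(h * margin)
    # Clamp the expanded box so it never reaches outside the image.
    left = max(0, x - pad_x)
    top = max(0, y - pad_y)
    right = min(img_w, x + w + pad_x)
    bottom = min(img_h, y + h + pad_y)
    return [row[left:right] for row in image[top:bottom]]


# Toy 10x10 "image" whose pixel value encodes its position (row * 10 + column).
image = [[r * 10 + c for c in range(10)] for r in range(10)]
# Hypothetical detection near the bottom-right corner of the image.
face = crop_face(image, box=(6, 6, 4, 4), margin=0.25)
print(len(face), len(face[0]))  # 5 5
```

The small margin keeps some context around the face, and the clamping handles faces that sit at the edge of the frame.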

Step 2: Face Alignment – Standardizing the Input

Human faces appear at different angles, expressions, and lighting conditions. A person might tilt their head, smile, or partially block their face. To handle these variations, AI systems perform face alignment.

Face alignment involves detecting key facial landmarks such as the eyes, nose, and mouth. The system then rotates and scales the image so that these landmarks align in a consistent position across all inputs.

This normalization process ensures that the recognition model focuses on meaningful structural features rather than irrelevant distortions caused by pose or camera angle.
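One simple way to picture alignment is to use only the two eye landmarks: rotate the face until the eyes are level, then scale it so the eyes are a fixed distance apart. The sketch below assumes the landmarks are already known. The function names and the `target_eye_distance` value are illustrative, and real systems usually use more landmarks.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def eye_alignment(left_eye: Point, right_eye: Point,
                  target_eye_distance: float = 60.0) -> Tuple[float, float]:
    """Return (angle_degrees, scale) that levels the eyes and normalizes their spacing."""
    dx = right_eye[0] - left_eye[0]
    dy = right_eye[1] - left_eye[1]
    angle = math.degrees(math.atan2(dy, dx))          # tilt of the line between the eyes
    scale = target_eye_distance / math.hypot(dx, dy)  # resize so the spacing is constant
    return angle, scale


def apply_alignment(point: Point, center: Point,
                    angle_degrees: float, scale: float) -> Point:
    """Rotate `point` by -angle around `center`, then scale about the same center."""
    theta = math.radians(-angle_degrees)
    px, py = point[0] - center[0], point[1] - center[1]
    rx = px * math.cos(theta) - py * math.sin(theta)
    ry = px * math.sin(theta) + py * math.cos(theta)
    return center[0] + scale * rx, center[1] + scale * ry


# Hypothetical landmarks for a tilted face.
left_eye, right_eye = (100.0, 100.0), (140.0, 130.0)
angle, scale = eye_alignment(left_eye, right_eye)
center = ((left_eye[0] + right_eye[0]) / 2, (left_eye[1] + right_eye[1]) / 2)
new_left = apply_alignment(left_eye, center, angle, scale)
new_right = apply_alignment(right_eye, center, angle, scale)
print(new_left, new_right)
```

After the transform both eyes share the same vertical coordinate and sit exactly `target_eye_distance` pixels apart, which is the "consistent position" the recognition model expects.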

Step 3: Feature Extraction – Turning Your Face into Numbers

This is where the real intelligence happens.

Once aligned, the face is passed into a deep neural network that transforms it into a numerical representation called a face embedding. Instead of storing the entire image, the model converts the face into a high-dimensional vector, often 128 to 512 numbers, that compactly describes its distinguishing characteristics.

Think of this embedding as a mathematical fingerprint of your face.

Models such as FaceNet, ArcFace, and MobileFaceNet are commonly used for this purpose. These models are trained using metric learning techniques that ensure faces of the same person are placed close together in vector space, while faces of different people are placed far apart.

For example, when you unlock your phone using facial recognition, your current face embedding is compared against a stored embedding. If the distance between the two vectors is below a certain threshold, access is granted.
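The unlock decision described above can be sketched in a few lines. This is a minimal illustration, assuming the embeddings have already been produced by some model. The 4-number vectors and the threshold of 1.0 are made up for the example, and real thresholds are tuned on validation data.

```python
import math
from typing import List, Sequence


def l2_normalize(vec: Sequence[float]) -> List[float]:
    """Scale a vector to unit length so distances are comparable."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_same_person(probe: Sequence[float], enrolled: Sequence[float],
                   threshold: float = 1.0) -> bool:
    """Accept when the normalized embeddings are closer than `threshold`."""
    distance = euclidean_distance(l2_normalize(probe), l2_normalize(enrolled))
    return distance < threshold


# Toy 4-dimensional embeddings (real ones have 128 to 512 dimensions).
enrolled = [0.10, 0.90, 0.20, 0.40]       # stored when the user set up face unlock
probe_owner = [0.12, 0.88, 0.25, 0.38]    # same person, slightly different photo
probe_stranger = [0.90, 0.10, 0.40, 0.10]

print(is_same_person(probe_owner, enrolled))     # True
print(is_same_person(probe_stranger, enrolled))  # False
```

Lowering the threshold makes the system stricter (fewer false accepts, more false rejects), and raising it does the opposite.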

Step 4: Face Matching – Measuring Similarity

After converting faces into embeddings, the system compares vectors using similarity metrics such as cosine similarity or Euclidean distance.

If two embeddings are sufficiently similar, the model concludes that they belong to the same person. If not, they are classified as different individuals.
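The two metrics are closely related. For unit-length embeddings, the squared Euclidean distance equals 2 minus twice the cosine similarity, so a threshold on one corresponds to a threshold on the other. The sketch below checks this on two arbitrary toy vectors. The helper names are illustrative.

```python
import math
from typing import List, Sequence


def unit(vec: Sequence[float]) -> List[float]:
    """Return `vec` scaled to length 1."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def squared_euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


# Two arbitrary toy embeddings, normalized to unit length.
a = unit([0.3, 0.5, 0.8])
b = unit([0.4, 0.4, 0.7])

cos = cosine_similarity(a, b)
dist_sq = squared_euclidean(a, b)

# For unit vectors: ||a - b||^2 == 2 - 2 * cos(a, b)
print(math.isclose(dist_sq, 2 - 2 * cos))  # True
```

This is why many systems normalize embeddings first: afterwards, "high cosine similarity" and "small Euclidean distance" rank candidates in the same order.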

This comparison process is computationally efficient and scalable, which is why large organizations can search through massive facial databases in real time.
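Searching a database is the same comparison repeated against every stored embedding. The sketch below uses a plain linear scan over a tiny gallery. The names, vectors, and the 0.8 threshold are invented for illustration, and very large systems typically rely on specialized nearest-neighbor indexes rather than a loop like this.

```python
import math
from typing import Dict, Optional, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def identify(probe: Sequence[float],
             gallery: Dict[str, Sequence[float]],
             threshold: float = 0.8) -> Optional[str]:
    """Return the best-matching identity, or None if nobody is similar enough."""
    best_name, best_score = None, threshold
    for name, embedding in gallery.items():
        score = cosine_similarity(probe, embedding)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical gallery of enrolled identities (toy 3-dimensional embeddings).
gallery = {
    "alice": [0.9, 0.1, 0.1],
    "bob": [0.1, 0.9, 0.1],
    "carol": [0.1, 0.1, 0.9],
}

print(identify([0.8, 0.2, 0.1], gallery))  # alice
print(identify([0.5, 0.5, 0.5], gallery))  # None
```

Returning `None` for a weak best match matters in practice: without a threshold, every unknown face would be assigned to whoever happens to be nearest.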

Platforms that integrate AI development tools from providers like Microsoft Azure AI or IBM Watson often leverage cloud infrastructure to scale recognition systems for enterprise use.

How AI Learns to Recognize You: The Role of Deep Learning

Deep learning models learn facial features through exposure to vast datasets containing labeled face images. During training:

- The model receives an image.

- It predicts an embedding.

- The system calculates a loss that is low when the embedding lies close to other embeddings of the same identity and far from embeddings of different identities.

- The model updates its internal weights using backpropagation.

Over millions of iterations, the network learns to emphasize highly discriminative features and ignore irrelevant variations such as hairstyle, glasses, or minor lighting differences.
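One widely used way to express the loss in the steps above is the triplet loss popularized by FaceNet. It compares an anchor image with a positive (same person) and a negative (different person). The sketch below shows only the loss calculation on toy 2-dimensional embeddings. The margin value is illustrative, and other models such as ArcFace use different loss functions.

```python
from typing import Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def triplet_loss(anchor: Sequence[float], positive: Sequence[float],
                 negative: Sequence[float], margin: float = 0.2) -> float:
    """Zero once the negative is farther from the anchor than the positive by `margin`."""
    gap = squared_distance(anchor, positive) - squared_distance(anchor, negative)
    return max(0.0, gap + margin)


anchor = [1.0, 0.0]          # embedding of person A, photo 1
positive = [0.9, 0.1]        # person A, photo 2
easy_negative = [0.0, 1.0]   # person B, already far away
hard_negative = [0.8, 0.2]   # person C, embedded too close to person A

print(triplet_loss(anchor, positive, easy_negative))  # 0.0: nothing left to learn here
print(triplet_loss(anchor, positive, hard_negative))  # positive: weights get adjusted
```

During training, backpropagation uses the non-zero losses to nudge the network's weights so that hard negatives like the last one move farther away.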

In general, the larger and more diverse the dataset, the more accurate the system becomes. However, this raises serious concerns about data collection and consent, topics we will explore shortly.

Where Face Recognition Is Used Today

Face recognition technology is now embedded across multiple industries:

1. Smartphone Authentication

Modern smartphones use face recognition for secure unlocking and payment verification. Unlike passwords, facial biometrics cannot be easily guessed or shared.

2. Airport and Border Control

Many countries deploy automated biometric gates to verify travelers using passport photo comparisons.

3. Law Enforcement

Authorities use facial databases to identify suspects, missing persons, or individuals on watchlists.

4. Banking and Fintech

Banks use facial verification for KYC (Know Your Customer) compliance and fraud prevention.

5. Retail and Marketing

Some retailers experiment with facial analytics to understand customer behavior patterns.

While these applications offer convenience and enhanced security, they also raise ethical and privacy concerns.

Security Implications of Face Recognition Technology

1. Biometric Data Is Permanent

Unlike passwords, you cannot change your face if it is compromised. If a facial database is breached, the consequences are long-lasting.

2. Risk of Data Breaches

Centralized facial databases are attractive targets for cybercriminals. Stolen biometric templates could potentially be misused for identity theft or unauthorized surveillance.

3. Deepfake Attacks and Spoofing

Advanced deepfake technology can create realistic fake faces and videos. Without proper liveness detection, systems can be fooled by high-resolution photos or videos.

4. Mass Surveillance Concerns

Widespread deployment of facial recognition in public spaces raises civil liberty concerns. Critics argue that continuous biometric tracking can erode personal freedoms and anonymity.

5. Algorithmic Bias

Studies, including a 2019 NIST evaluation of demographic effects across many face recognition algorithms, have shown that some systems perform less accurately on certain demographic groups. This bias often stems from imbalanced training datasets.
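Bias of this kind is usually measured by computing error rates separately for each group. The sketch below shows the bookkeeping only. The group labels and trial outcomes are invented toy data, not results from any real system or study.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple


class Trial(NamedTuple):
    group: str             # demographic group of the person being tested
    same_person: bool      # ground truth: do the two photos show the same person?
    predicted_match: bool  # what the system decided


def error_rates_by_group(trials: Iterable[Trial]) -> Dict[str, Dict[str, float]]:
    counts = defaultdict(lambda: {"fm": 0, "impostor": 0, "fnm": 0, "genuine": 0})
    for t in trials:
        c = counts[t.group]
        if t.same_person:
            c["genuine"] += 1
            c["fnm"] += int(not t.predicted_match)  # false non-match
        else:
            c["impostor"] += 1
            c["fm"] += int(t.predicted_match)       # false match
    return {
        group: {
            "false_match_rate": c["fm"] / c["impostor"] if c["impostor"] else float("nan"),
            "false_non_match_rate": c["fnm"] / c["genuine"] if c["genuine"] else float("nan"),
        }
        for group, c in counts.items()
    }


# Invented toy outcomes, purely to exercise the function.
trials = [
    Trial("A", True, True), Trial("A", True, True),
    Trial("A", False, False), Trial("A", False, False),
    Trial("B", True, True), Trial("B", True, False),
    Trial("B", False, True), Trial("B", False, False),
]
rates = error_rates_by_group(trials)
print(rates["A"], rates["B"])
```

A large gap between groups in either rate is the signal that a system treats some people less reliably than others.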

Privacy Concerns: Who Owns Your Face?

One of the biggest questions surrounding face recognition is consent. Many individuals are unaware that their images may be scraped from public sources to train AI systems.

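Data protection regulations such as the GDPR in Europe, which treats biometric data used to identify a person as a special category requiring extra safeguards, attempt to regulate biometric data usage, but enforcement remains challenging.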

Users must understand that uploading images online can potentially contribute to AI training ecosystems. Transparency and ethical data governance are becoming increasingly important in AI development.

How Secure Is Face Recognition Compared to Other Authentication Methods?

Used on its own, face recognition is generally more secure than a simple password but less secure than multi-factor authentication.

The strongest security systems combine:

- Face recognition

- Device authentication

- PIN or password

- Behavioral biometrics

Layered security significantly reduces risk.
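A back-of-the-envelope calculation shows why layering helps. The sketch below assumes the factors fail independently and that an attacker must defeat all of them. The face false-accept rate is a hypothetical figure chosen for illustration, while the PIN figure is simply the chance of guessing a random 4-digit code in one attempt.

```python
import math
from typing import Iterable


def combined_false_accept_rate(per_factor_rates: Iterable[float]) -> float:
    """Probability that every factor is fooled, assuming independent failures."""
    return math.prod(per_factor_rates)


face_rate = 1e-5        # hypothetical false-accept rate for face unlock
pin_rate = 1 / 10_000   # one random guess at a 4-digit PIN

combined = combined_false_accept_rate([face_rate, pin_rate])
print(f"face only: {face_rate:.0e}, face + PIN: {combined:.0e}")
```

Real factors are rarely perfectly independent, so the true benefit is smaller than the product suggests, but the direction of the effect is the same.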

The Future of Face Recognition AI

Face recognition continues to evolve. Emerging improvements include:

- Better fairness and bias mitigation

- Stronger anti-spoofing mechanisms

- Edge-device processing to reduce data sharing

- Privacy-preserving machine learning techniques

Federated learning and encrypted embeddings may soon allow systems to verify identities without exposing raw biometric data.

As the technology advances, balancing convenience, security, and civil rights will define its long-term success.

Final Thoughts: Power, Precision, and Responsibility

Face recognition AI is no longer science fiction — it is part of everyday life. The same technology that unlocks your phone in milliseconds can also track individuals across cities. It can prevent fraud, enhance security, and streamline identity verification — but it can also challenge the boundaries of privacy and consent.

Understanding how AI learns to recognize you empowers you to make informed decisions about your digital identity. As biometric systems become more widespread, awareness is your first layer of protection.

The future of face recognition will not just be defined by algorithms — but by ethics, regulation, and responsible innovation.
