Introduction: The Invisible Engine Behind Modern AI

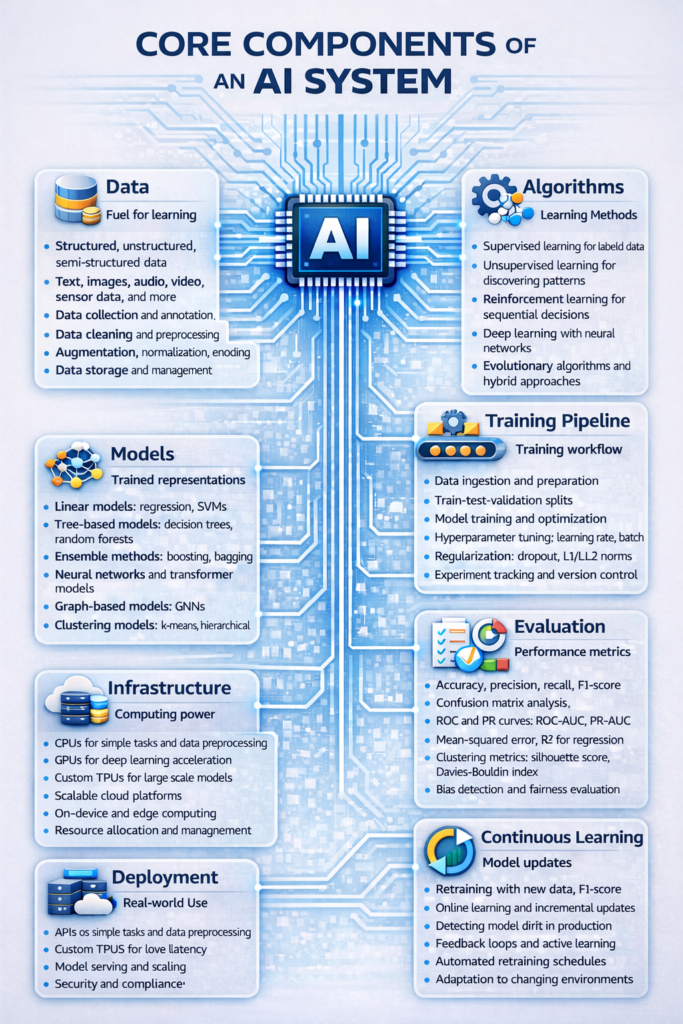

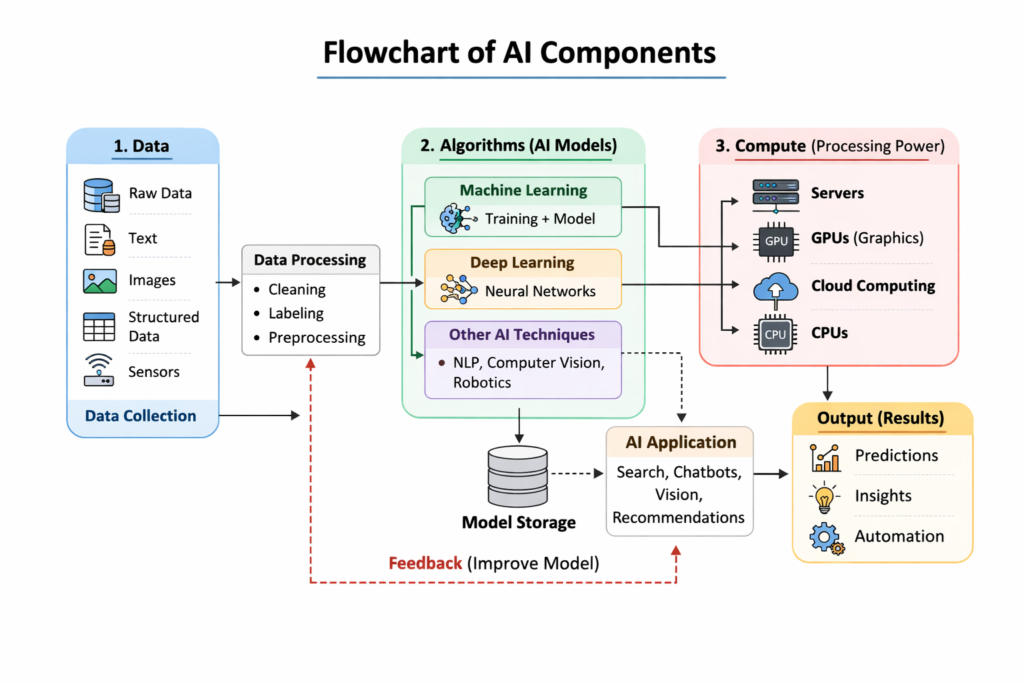

Artificial Intelligence is often talked about as if it works by magic—but behind every intelligent application lies a structured system designed with precision. Whether you’re a student exploring AI fundamentals, a professional building machine-learning solutions, or a tech enthusiast trying to understand what powers tools like recommendation engines, chatbots, predictive analytics, or autonomous systems, knowing the core components of an AI system is essential. These components—data, algorithms, models, infrastructure, learning pipelines, and evaluation frameworks—form the foundation that makes intelligent decision-making possible.

What makes this topic especially compelling is how each component interacts with others: data trains the models, algorithms shape learning, and infrastructure ensures scalability and speed. Grasping these connections helps you understand not just what AI does, but how it truly works under the hood.

Why Understanding AI Components Matters

In an age where AI is integrated into finance, healthcare, entertainment, retail, transportation, and personal productivity tools, understanding its inner workings is no longer optional. Many people can use AI, but far fewer understand its architecture. A complete AI system is more than just a trained model—it is an ecosystem. From raw data collection to training methodologies, continuous monitoring, and real-world deployment, every component impacts the system’s accuracy, reliability, ethics, and performance. This makes the topic highly relevant for learners and professionals wanting clarity beyond superficial definitions.

1. Data: The Fuel That Powers Every AI System

A modern AI system is only as strong as the data behind it. Data forms the backbone of machine learning, providing the context and patterns that models learn from. High-quality, diverse, and representative datasets allow AI systems to generalize well in practical scenarios. Poor data—no matter how advanced the algorithm—results in inaccurate predictions and biased outcomes.

1.1 Types of Data in AI Systems

AI systems rely on several categories of data:

- Structured data: rows and columns, such as customer details, financial records, or sensor logs.

- Unstructured data: text, images, audio, and video, which together account for a large share of real-world information.

- Semi-structured data: logs, XML and JSON files, metadata, and tagged user-generated content.

- Real-time data: streaming data from IoT devices, transactions, or live systems.


Each type of data plays a distinct role depending on the AI application. Structured data helps algorithms identify numerical relationships and categorical patterns, making it well suited to forecasting, classification, and optimization tasks. Unstructured data feeds deep learning architectures such as CNNs for image recognition or transformers for natural language understanding. Semi-structured data sits between the two: it carries tags or keys that describe its fields without enforcing a fixed schema. Real-time data supports applications like fraud detection, autonomous navigation, and stock market prediction. Understanding the nature, quality, distribution, and relevance of data is one of the first steps in building any effective AI pipeline.
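To make the distinction concrete, here is a minimal sketch in Python using only the standard library. The sample records, field names, and the naive word count are illustrative assumptions, not data from a real system.

```python
import csv
import io
import json

# Structured: fixed columns, every row has the same fields.
csv_text = "customer_id,age,balance\n101,34,2500.50\n102,41,310.00\n"
rows = list(csv.DictReader(io.StringIO(csv_text)))
ages = [int(row["age"]) for row in rows]

# Semi-structured: keys describe each field, but fields may vary per record.
log_lines = [
    '{"event": "login", "user": 101}',
    '{"event": "purchase", "user": 102, "amount": 19.99}',
]
events = [json.loads(line) for line in log_lines]
amounts = [event.get("amount", 0.0) for event in events]  # default when absent

# Unstructured: free text has no schema, so a feature-extraction step
# (here a naive bag-of-words count) has to impose one.
review = "great product great price"
word_counts = {}
for word in review.split():
    word_counts[word] = word_counts.get(word, 0) + 1

print(ages, amounts, word_counts)
```

Notice that the structured rows can be read column by column, the semi-structured events need a default for missing keys, and the text needs an explicit step before it yields any numbers at all.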

1.2 Data Preprocessing and Cleaning

Raw data is rarely ready for direct use. AI engineers must clean it, handle missing values, remove noise, normalize feature scales, and sometimes augment it with additional examples. These tasks matter because a model can only be as accurate and consistent as the information it learns from, and careless preprocessing can introduce bias of its own. Data preprocessing is often the most time-consuming phase, but it typically improves model performance and reduces computational waste.
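Two of these steps, handling missing values and normalization, can be sketched in a few lines. This is a simplified illustration: mean imputation and min-max scaling are just one common choice each, and the helper names `impute_missing` and `min_max_scale` are made up for this example.

```python
from statistics import mean

def impute_missing(values):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]

def min_max_scale(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

raw_ages = [20, None, 40, 60]
filled = impute_missing(raw_ages)  # None is replaced by mean(20, 40, 60)
scaled = min_max_scale(filled)
print(filled, scaled)
```

In a real pipeline the fill value and the minimum and maximum would normally be computed on the training split only and then reused on validation and test data, so that no information leaks from the held-out sets.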

2. Algorithms: The Intelligence Blueprint

Algorithms define how an AI system learns. They are mathematical procedures that convert data into patterns and predictions. Think of algorithms as the instructions that guide the model: they determine how data is processed, how errors are minimized, and how patterns are extracted.

2.1 Categories of AI Algorithms

AI algorithms can be broadly classified into:

- Supervised learning: learns from labeled data.

- Unsupervised learning: finds hidden structures in unlabeled data.

- Reinforcement learning: learns by interacting with an environment.

- Deep learning: neural network-based learning models.

- Evolutionary and hybrid algorithms: optimization-driven methods.


Supervised learning is widely used in applications like fraud detection, medical diagnosis, email spam classification, and demand forecasting, where labeled historical examples guide future predictions. Unsupervised learning is used for clustering, anomaly detection, and parts of recommendation systems, where it identifies natural groupings in data without labels. Reinforcement learning powers robotics, game playing, and other dynamic systems that learn by trial and error from rewards. Deep learning, a subset of machine learning, stacks layers of artificial neurons loosely inspired by the brain, and has enabled breakthroughs in vision, speech, and language tasks. It is a family of models rather than a separate learning paradigm, so it can be combined with supervised, unsupervised, or reinforcement learning. Each algorithm type solves different real-world problems and requires distinct computational strategies.
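The difference between learning with and without labels is easiest to see side by side. The sketch below uses a nearest-centroid classifier as a stand-in for supervised learning and a one-dimensional k-means with two clusters as a stand-in for unsupervised learning. Both are deliberately tiny, and the function names and data are assumptions made for this illustration.

```python
from statistics import mean

def fit_centroids(points, labels):
    """Supervised: the labels say which points belong together."""
    groups = {}
    for point, label in zip(points, labels):
        groups.setdefault(label, []).append(point)
    return {label: mean(members) for label, members in groups.items()}

def classify(centroids, x):
    """Predict the label whose centroid is closest to x."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))

def two_means(points, iterations=10):
    """Unsupervised: 1-D k-means with k=2, using no labels at all.

    Assumes the points contain at least two distinct values.
    """
    c0, c1 = min(points), max(points)
    for _ in range(iterations):
        near0 = [p for p in points if abs(p - c0) <= abs(p - c1)]
        near1 = [p for p in points if abs(p - c0) > abs(p - c1)]
        c0, c1 = mean(near0), mean(near1)
    return c0, c1

points = [1.0, 2.0, 3.0, 10.0, 11.0, 12.0]
labels = ["low", "low", "low", "high", "high", "high"]

centroids = fit_centroids(points, labels)  # needs the labels
clusters = two_means(points)               # finds the groups on its own
print(centroids, classify(centroids, 4.0), clusters)
```

On this clean toy data both approaches recover the same two groups. The supervised version can also name them, because the names came with the labels.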

3. Models: The Learned Representation of Intelligence

A model is the outcome of training an algorithm on data. It is the mathematical representation of learned patterns. While algorithms define the learning process, models are what actually make predictions.
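A small sketch can make the split between algorithm and model tangible. Here gradient descent on mean squared error plays the role of the algorithm, and the dictionary of learned parameters it returns is the model. The function names, learning rate, and epoch count are illustrative assumptions.

```python
def fit_linear(xs, ys, learning_rate=0.05, epochs=2000):
    """Algorithm: batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return {"w": w, "b": b}  # the model: nothing but learned numbers

def predict(model, x):
    """Prediction uses only the model, not the training algorithm."""
    return model["w"] * x + model["b"]

xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # generated from y = 2x + 1
model = fit_linear(xs, ys)
print(model, predict(model, 4.0))
```

Once `fit_linear` has finished, the training data and the algorithm can be discarded. Only the two numbers in `model` are needed to make predictions, which is why models can be saved, versioned, and shipped separately from training code.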

3.1 Types of Models

- Linear and logistic regression models

- Decision trees and ensemble models

- Neural networks

- Transformer-based models

- Graph models

- Clustering models


Models transform data into actionable insights. For example, regression models highlight relationships between variables and help forecast trends. Decision trees provide rule-based reasoning that is easy to interpret, while ensemble methods combine multiple weak learners to form highly stable and powerful predictors. Neural networks allow systems to classify images, understand speech, and generate human-like text. Transformer models have revolutionized language processing with their attention-based architecture. Graph models help analyze relationships in social networks, biological systems, and recommendation engines. Understanding how models function—and how they differ—helps developers align the right model with the right use case.

4. Training Pipelines: How AI Learns Step by Step

Training an AI system means iteratively adjusting a model’s parameters so that its predictions on the training data improve. A well-designed training pipeline makes this process efficient and reproducible, and produces accuracy estimates that can be trusted.

4.1 Key Steps in AI Training

- Data ingestion and preprocessing

- Train-validation-test splitting

- Model training and optimization

- Hyperparameter tuning

- Regularization to prevent overfitting

- Checkpointing and version control


The training pipeline ensures models evolve through structured experiments rather than guesswork. Data ingestion gathers and formats information. Splitting the dataset makes it possible to measure generalization by assessing model performance on examples the model never saw during training. Hyperparameter tuning searches for good settings, such as learning rate, tree depth, or regularization strength, by comparing candidates on the validation set. Techniques such as dropout and L2 regularization discourage models from memorizing the training data; batch normalization mainly stabilizes training, with a mild regularizing side effect. Checkpointing and version control make it possible to resume, compare, and reproduce runs. With proper pipeline management, AI development becomes faster, more stable, and easier to scale.
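Splitting and tuning can be sketched together in a few lines. The example below is a toy under stated assumptions: a one-dimensional ridge regression through the origin stands in for the model, the candidate L2 strengths are arbitrary, and the helper names are invented for this illustration.

```python
import random

def train_val_test_split(items, val_frac=0.2, test_frac=0.2, seed=0):
    """Shuffle a copy of items and cut it into three disjoint parts."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed for reproducibility
    n_test = int(len(shuffled) * test_frac)
    n_val = int(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test

def fit_ridge_1d(pairs, l2):
    """Closed-form 1-D ridge fit: w = sum(x*y) / (sum(x*x) + l2)."""
    sxy = sum(x * y for x, y in pairs)
    sxx = sum(x * x for x, _ in pairs)
    return sxy / (sxx + l2)

def mse(w, pairs):
    """Mean squared error of the prediction w * x."""
    return sum((w * x - y) ** 2 for x, y in pairs) / len(pairs)

def tune_l2(train, val, candidates):
    """Grid search: keep the l2 value with the lowest validation error."""
    return min(candidates, key=lambda l2: mse(fit_ridge_1d(train, l2), val))

data = [(float(x), 2.0 * x) for x in range(1, 11)]  # noise-free y = 2x
train, val, test = train_val_test_split(data)
best_l2 = tune_l2(train, val, candidates=[0.0, 1.0, 10.0])
final_w = fit_ridge_1d(train, best_l2)
print(len(train), len(val), len(test), best_l2, mse(final_w, test))
```

The test split is touched only once, after the hyperparameter has been chosen on the validation split. Because this toy data contains no noise, the search selects no regularization at all; on noisy real data a nonzero strength may win.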

5. Infrastructure: The Hardware and Software Ecosystem

AI systems rely heavily on infrastructure for storage, computation, and deployment. Without the right infrastructure, even the best algorithms fail to perform efficiently.

5.1 Computing Resources

- CPUs for general computation

- GPUs for parallel processing

- TPUs for deep-learning acceleration

- Scalable cloud platforms

- On-device edge computing


Deep learning models in particular require massive computational power. GPUs accelerate the matrix computations needed for training large neural networks by running many operations in parallel. TPUs are accelerators designed specifically for the tensor operations that dominate neural network workloads. Cloud platforms allow researchers and companies to scale resources up and down as needed, which improves flexibility and can reduce costs compared with owning idle hardware. Edge devices run models close to where data is produced, enabling low-latency applications like real-time translation or driver assistance. Infrastructure determines how fast, how big, and how efficiently AI systems operate.

6. Evaluation and Validation: Measuring AI’s Effectiveness

AI systems must be evaluated rigorously to ensure reliability. Evaluation metrics differ across tasks.

6.1 Evaluation Metrics

- Accuracy, precision, recall, F1-score

- Confusion matrices

- ROC and precision-recall (PR) curves, summarized by ROC-AUC and PR-AUC

- Mean squared error (MSE) for regression

- Clustering metrics: silhouette score, Davies–Bouldin index


Metrics quantify how well a model performs and help identify weaknesses. In classification tasks, accuracy alone may be misleading, especially with imbalanced data. Precision and recall provide deeper insights into false positives and false negatives, while the F1-score gives a single, balanced metric. ROC and PR curves illustrate the trade-offs across thresholds. For clustering models, where no labels exist, silhouette scores and similar metrics assess how well the data is grouped. Evaluation ensures AI performs reliably before deployment.
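The point about imbalanced data is worth seeing in numbers. The sketch below computes the four metrics from confusion-matrix counts for two hypothetical sets of predictions. The labels are made up, and the convention of returning 0.0 when a denominator is zero is an assumption of this example.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn

def classification_report(y_true, y_pred):
    """Accuracy, precision, recall, and F1 from confusion counts."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}

# 95 negatives followed by 5 positives.
y_true = [0] * 95 + [1] * 5

# A "model" that always predicts the majority class.
always_negative = [0] * 100
lazy = classification_report(y_true, always_negative)

# A model that finds 4 of the 5 positives at the cost of 2 false alarms.
useful_pred = [0] * 93 + [1] * 2 + [1] * 4 + [0] * 1
useful = classification_report(y_true, useful_pred)

print(lazy)
print(useful)
```

The always-negative predictor reaches 95% accuracy while finding none of the positive cases, so its recall and F1 are zero. The second predictor is only slightly more accurate, yet its recall and F1 show that it is far more useful.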

7. Deployment: Taking AI from Lab to Real World

Once trained and validated, the model must be deployed. Deployment transforms a static model into a scalable, real-world application.

7.1 Deployment Methods

- APIs and microservices

- Edge deployment

- Cloud-hosted AI platforms

- Embedded systems

- MLOps pipelines for continuous monitoring


Deployment means packaging a model for a production environment while ensuring compatibility, versioning, scalability, and security. MLOps practices help automate deployment, monitor for performance drift, retrain models, and track predictions. This stage determines whether AI solutions remain useful and accurate as data changes over time.
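Drift monitoring can start very simply. The sketch below compares the mean of a live feature against the training data, measured in training standard deviations. This is one basic heuristic among many; the threshold of 2.0, the function names, and the sample values are all assumptions for illustration.

```python
from statistics import mean, stdev

def mean_shift_score(reference, live):
    """Shift of the live mean, in units of the reference standard deviation.

    Assumes the reference has at least two values and is not constant.
    """
    return abs(mean(live) - mean(reference)) / stdev(reference)

def has_drifted(reference, live, threshold=2.0):
    """Flag a batch whose mean moved more than `threshold` deviations."""
    return mean_shift_score(reference, live) > threshold

training_values = [10.0, 11.0, 9.0, 10.5, 9.5, 10.0]
stable_batch = [10.2, 9.8, 10.1]
shifted_batch = [14.0, 15.0, 13.5]

print(has_drifted(training_values, stable_batch))
print(has_drifted(training_values, shifted_batch))
```

A check like this would typically run on a schedule against recent production inputs, with a flagged batch triggering an alert or a retraining job. Production systems usually compare whole distributions rather than just means.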

8. Continuous Learning: Keeping AI Relevant

AI systems can improve over time through periodic retraining, online learning, and real-time feedback loops. Continuous learning keeps a model adapted to current data; without it, performance degrades as the environment drifts away from what the model was trained on.
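Online learning, one form of continuous learning, updates the model one example at a time instead of retraining from scratch. The sketch below uses a single-weight linear model and a synthetic stream whose underlying slope changes halfway through. The stream, the learning rate, and the function names are assumptions made for this illustration.

```python
def online_update(w, x, y, learning_rate=0.1):
    """One stochastic gradient step on squared error for y ≈ w * x."""
    error = w * x - y
    return w - learning_rate * 2 * error * x

def stream(slope, n):
    """Synthetic feedback stream: n examples drawn from y = slope * x."""
    xs = [0.5, 1.0, 1.5]
    for i in range(n):
        x = xs[i % len(xs)]
        yield x, slope * x

w = 0.0
for x, y in stream(slope=2.0, n=200):  # the environment at launch
    w = online_update(w, x, y)
w_before = w

for x, y in stream(slope=3.0, n=200):  # the environment after it changes
    w = online_update(w, x, y)
w_after = w

print(w_before, w_after)
```

A model frozen after the first loop would keep predicting with a slope near 2 and grow steadily more wrong. The model that keeps updating follows the change to a slope near 3.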

Table: Core Components of an AI System

| Core Component | What It Means | Key Elements | Why It Matters |
|---|---|---|---|
| Data | The foundational input AI learns from. | Structured data, unstructured data, semi-structured data, real-time data, data preprocessing | High-quality data improves accuracy, reduces bias, and ensures better model generalization. |
| Algorithms | Mathematical procedures that determine how learning happens. | Supervised learning, unsupervised learning, reinforcement learning, deep learning, evolutionary algorithms | Algorithms define how data is processed, how errors are minimized, and how patterns are learned. |
| Models | The trained representation built from algorithms and data. | Regression models, decision trees, ensembles, neural networks, transformers, graph models, clustering models | Models make the predictions, classifications, and decisions that AI systems rely on in real applications. |
| Training Pipeline | The end-to-end workflow that prepares data and trains the model. | Data ingestion, splitting, training, hyperparameter tuning, regularization, checkpointing, version control | Ensures systematic, reproducible model training with scalable improvement and reduced errors. |
| Infrastructure | Hardware and software environment that powers AI. | CPUs, GPUs, TPUs, cloud platforms, on-device edge computing | Enables large-scale computation, fast training, and efficient deployment. |
| Evaluation | Methods used to measure and validate AI performance. | Accuracy, precision, recall, F1, ROC-AUC, PR-AUC, MSE, silhouette score, Davies–Bouldin index | Ensures reliability, fairness, and performance before deployment. |
| Deployment | Delivering the trained model into real-world use. | APIs, microservices, edge deployment, cloud hosting, embedded systems, MLOps | Makes AI accessible to users, scalable in production, and monitored for drift. |
| Continuous Learning | Updating models to keep them relevant as data changes. | Online learning, periodic retraining, feedback loops | Prevents model decay and keeps AI aligned with changing environments. |

Conclusion

Understanding the core components of an AI system helps demystify how data, algorithms, and models interact to create intelligent behavior. These components form the foundation for every AI application, from predictive analytics to autonomous systems. Whether you’re a beginner or a seasoned practitioner, recognizing how these elements work together equips you to build, evaluate, and deploy reliable AI solutions.