Introduction: How Machines Learn Like Humans Through Rewards and Mistakes

In a world where machines are no longer just tools but decision-makers, a fascinating question arises: how do they learn what is right and what is wrong without being explicitly told? Reinforcement Learning (RL) answers this by mimicking one of the most natural learning processes humans rely on—learning through experience. Think about how a child learns to walk, how a gamer improves with each level, or how we adjust our habits based on outcomes.

Reinforcement Learning operates on the same principle: trial, error, reward, and improvement. This blog explores Reinforcement Learning in a way that connects deeply with real-life experiences, making it intuitive, practical, and meaningful for learners, students, and professionals alike.

What is Reinforcement Learning?

Reinforcement Learning is a type of machine learning in which an agent learns to make decisions by interacting with an environment. Instead of being taught with labeled data, as in supervised learning, the agent learns from feedback in the form of rewards or penalties for its actions.

At its core, RL is about answering one fundamental question: “What should I do now to maximize future rewards?” This is not just a computational problem—it mirrors how humans think in uncertain situations.

For example, imagine training a dog. You don’t explain rules in detail. Instead, you reward good behavior (like sitting on command) and discourage bad behavior. Over time, the dog learns the desired behavior through reinforcement. Similarly, an RL model learns by exploring different actions and understanding their consequences.

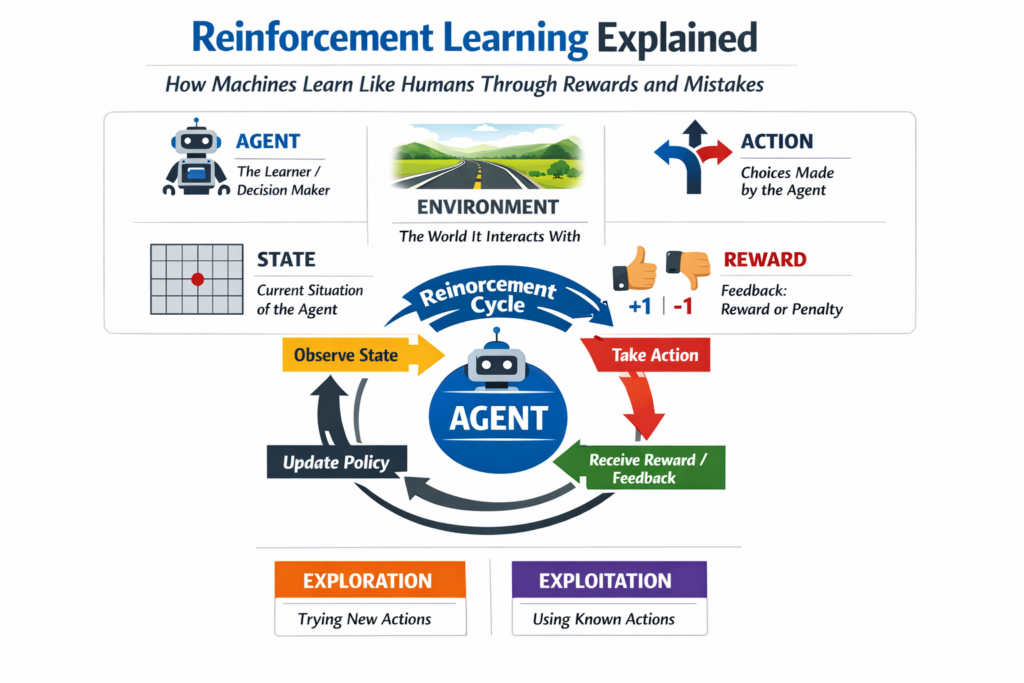

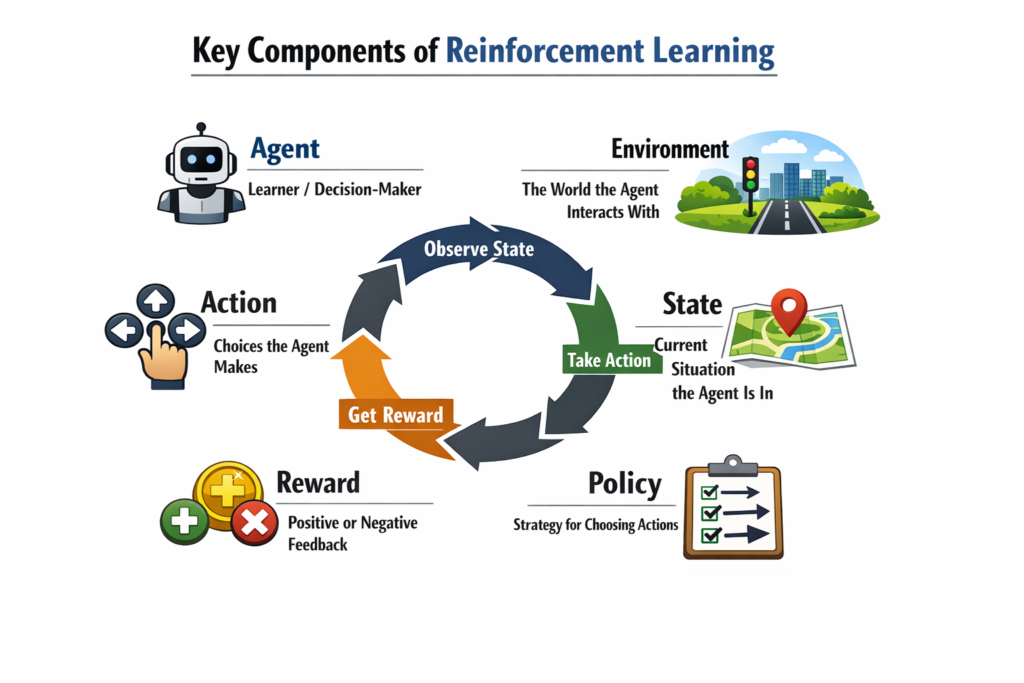

Key Components of Reinforcement Learning

To understand Reinforcement Learning (RL) in a deeper and more practical way, it is important to break it down into its core components. Each of these elements plays a critical role in how an RL system learns, adapts, and improves over time. Together, they form the foundation of decision-making in dynamic environments.

1. Agent

The agent is the central entity in Reinforcement Learning—the learner and decision-maker. It interacts with the environment, takes actions, and learns from the outcomes of those actions. The agent can take many forms depending on the application, such as a robot navigating a room, a software algorithm playing a game, or an AI system optimizing recommendations. Its primary objective is to learn a strategy that maximizes cumulative rewards over time by continuously improving its decisions.

2. Environment

The environment represents everything the agent interacts with. It defines the rules, conditions, and possible scenarios the agent may encounter. Whenever the agent takes an action, the environment responds by changing its state and providing feedback in the form of rewards or penalties. For example, in a game, the environment includes the game board, rules, and opponents. In real-world applications, it could be traffic conditions for a self-driving car or user behavior in a recommendation system.

3. Action

Actions are the set of all possible decisions or moves the agent can make at any given moment. These actions directly influence the state of the environment and determine the kind of feedback the agent will receive. The choice of action is crucial because it impacts both immediate rewards and future outcomes. For instance, in a navigation task, actions could include moving left, right, forward, or backward. The agent must learn which actions are most beneficial in different situations.

4. State

The state refers to the current situation or context of the agent within the environment. Ideally, it contains all the information the agent needs to make a decision; in practice, the agent often sees only a partial observation of it (a camera image rather than the full layout of the road, for example). States can be simple, such as the position of a player in a grid, or highly complex, like sensor data in autonomous driving systems. The agent observes the state and uses it to decide the next best action, making state representation a critical aspect of effective learning.

5. Reward

The reward is the feedback signal that guides the learning process. After the agent takes an action, it receives a reward from the environment, which indicates whether the action was good or bad. Rewards can be positive (encouraging certain behaviors) or negative (discouraging undesirable actions). The ultimate goal of the agent is to maximize the total accumulated reward over time, not just immediate gains. Designing an effective reward system is crucial, as it directly influences how the agent learns.
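To make "total accumulated reward" concrete, one common formalization is the discounted return, in which a reward received k steps in the future is weighted by a discount factor raised to the power k. The sketch below assumes that formalization; the function name `discounted_return` and the parameter `gamma` are illustrative choices, not something defined earlier in this post.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum of rewards where a reward k steps ahead is weighted by gamma**k."""
    total = 0.0
    # Work backwards: return now = reward now + gamma * (return from the next step).
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# A delayed reward: nothing for three steps, then +10.
rewards = [0, 0, 0, 10]
print(discounted_return(rewards, gamma=0.9))  # the +10 is weighted by 0.9**3
print(discounted_return(rewards, gamma=1.0))  # no discounting: the plain sum
```

A `gamma` close to 1 makes the agent value distant rewards almost as much as immediate ones, while a `gamma` close to 0 makes it short-sighted.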

6. Policy

A policy is the strategy or rule that the agent follows to decide which action to take in a given state. It can be thought of as the agent’s “behavior pattern.” Policies can be simple (rule-based) or complex (learned through neural networks). Over time, the agent refines its policy to improve decision-making and achieve better outcomes. The quality of the policy ultimately determines how well the agent performs in its environment.
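As a rough illustration, a policy can be as simple as a lookup table from states to actions, or it can assign a probability to each action. The state names, action names, and probabilities below are hypothetical, chosen only to show the two shapes a policy can take.

```python
import random

# Hypothetical actions for a tiny navigation task.
ACTIONS = ["left", "right"]

# A deterministic, rule-based policy: one fixed action per state.
rule_based_policy = {"at_start": "right", "in_middle": "right", "near_wall": "left"}


def act_deterministic(state):
    return rule_based_policy[state]


# A stochastic policy: a probability for each action in each state.
stochastic_policy = {
    "at_start": {"left": 0.1, "right": 0.9},
    "in_middle": {"left": 0.5, "right": 0.5},
    "near_wall": {"left": 0.8, "right": 0.2},
}


def act_stochastic(state, rng=random):
    probs = stochastic_policy[state]
    # Sample one action according to the probabilities for this state.
    return rng.choices(list(probs), weights=list(probs.values()), k=1)[0]


print(act_deterministic("at_start"))
print(act_stochastic("near_wall"))
```

A learned policy has the same interface (state in, action out); the difference is that the table or probabilities are adjusted from experience rather than written by hand.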

How These Components Work Together

All these components are interconnected and operate in a continuous loop. The agent observes the current state of the environment, selects an action based on its policy, and then receives feedback in the form of a reward along with a new state. Using this information, the agent updates its understanding and improves its policy for future decisions.

This iterative cycle—observe, act, learn, and improve—is what enables Reinforcement Learning systems to evolve over time and handle increasingly complex tasks effectively.

Real-Life Analogies to Understand Reinforcement Learning

Learning to Ride a Bicycle

When you first learn to ride a bicycle, no one gives you a detailed instruction manual that guarantees success. Instead, you try, fall, adjust, and try again. Each successful attempt reinforces the correct balance and coordination.

- Falling = Negative reward

- Maintaining balance = Positive reward

- Practice = Exploration

Over time, your brain develops a “policy” that helps you stay balanced effortlessly.

Video Games and Skill Improvement

Consider playing a video game. Initially, you may lose frequently. However, each loss teaches you something—avoid certain moves, time your actions better, or use resources wisely.

- Winning points = Reward

- Losing health/lives = Penalty

- Strategy improvement = Learning

RL algorithms optimize their behavior in much the same way.

Studying for Exams

Students often adjust their study strategies based on results:

- Good grades = Reinforcement of current method

- Poor grades = Need to change approach

The student acts as the agent, the exam environment provides feedback, and performance determines future strategies.

How Reinforcement Learning Works: Step-by-Step

Reinforcement Learning follows a structured cycle:

- The agent observes the current state of the environment

- It chooses an action based on its policy

- The environment responds with a new state and reward

- The agent updates its knowledge to improve future decisions

This continuous loop allows the agent to gradually learn the best possible strategy.
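The cycle above can be sketched in a few lines of code. This is a minimal illustration, not a full learning agent: the `Corridor` environment, its reward values, and the two policies are all hypothetical, and the "update" step is left as a comment because the loop looks the same regardless of which learning rule is plugged in.

```python
import random


class Corridor:
    """Hypothetical environment: positions 0..4, start at 0, goal at 4."""

    def __init__(self, length=5):
        self.length = length
        self.position = 0

    def reset(self):
        self.position = 0
        return self.position

    def step(self, action):
        # action is -1 (left) or +1 (right); walls clamp the position.
        self.position = max(0, min(self.length - 1, self.position + action))
        done = self.position == self.length - 1
        reward = 1.0 if done else -0.1  # small penalty per step, reward at the goal
        return self.position, reward, done


def run_episode(env, policy, max_steps=100):
    state = env.reset()                              # 1. observe the current state
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)                       # 2. choose an action
        next_state, reward, done = env.step(action)  # 3. environment responds
        total_reward += reward                       # 4. a learning agent would update here
        state = next_state
        if done:
            break
    return total_reward


rng = random.Random(0)


def random_policy(state):
    return rng.choice([-1, 1])


def always_right(state):
    return 1


print(run_episode(Corridor(), always_right))
print(run_episode(Corridor(), random_policy))
```

The `reset`/`step` split mirrors the interface used by many RL toolkits, but the class here is a stand-in written for this example rather than any particular library's API.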

A critical aspect of RL is balancing:

- Exploration: Trying new actions to discover better outcomes

- Exploitation: Using known actions that yield high rewards

Too much exploration wastes time on actions the agent already knows are poor, while too much exploitation can lock the agent into a mediocre strategy and prevent it from discovering better ones.
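One simple and widely used way to strike this balance is the epsilon-greedy rule: with probability epsilon the agent picks a random action, and otherwise it picks the action it currently believes is best. The sketch below applies that rule to a hypothetical three-armed slot machine; the payout probabilities, step count, and function name are assumptions made for illustration.

```python
import random


def run_bandit(epsilon, true_means=(0.2, 0.5, 0.8), steps=2000, seed=0):
    """Epsilon-greedy on a hypothetical 3-armed bandit with win/lose rewards."""
    rng = random.Random(seed)
    n_arms = len(true_means)
    counts = [0] * n_arms       # how often each arm was pulled
    estimates = [0.0] * n_arms  # running average reward per arm
    total = 0.0
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)                           # explore
        else:
            arm = max(range(n_arms), key=lambda a: estimates[a])  # exploit
        reward = 1.0 if rng.random() < true_means[arm] else 0.0
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # incremental mean
        total += reward
    return total / steps, counts


for eps in (0.0, 0.1, 1.0):
    avg, counts = run_bandit(eps)
    print(f"epsilon={eps}: average reward {avg:.3f}, pulls per arm {counts}")
```

In this setup a purely greedy agent (epsilon of 0) never leaves the first arm, because ties are broken toward it and its estimate never drops below the untouched zeros of the other arms. A purely random agent (epsilon of 1) samples every arm but never uses what it has learned.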

Types of Reinforcement Learning

1. Model-Free Reinforcement Learning

The agent learns directly from experience without building a model of how the environment works. It never tries to predict the next state; it only estimates which actions tend to lead to high reward.

Example: Learning to play a game by trial and error without knowing the game rules beforehand.
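As a minimal sketch of model-free learning, the code below runs tabular Q-learning on a hypothetical five-cell corridor. The environment, the reward values, and the hyperparameters (`alpha`, `gamma`, `epsilon`) are all assumptions chosen for illustration. The key point is that the agent only ever sees the outputs of `step`, never its internals.

```python
import random

N_STATES, GOAL = 5, 4  # hypothetical corridor: states 0..4, goal at 4
ACTIONS = (-1, +1)     # move left or move right


def step(state, action):
    """Environment dynamics; the agent only sees what this returns."""
    next_state = max(0, min(N_STATES - 1, state + action))
    done = next_state == GOAL
    return next_state, (1.0 if done else -0.1), done


def q_learning(episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy action selection.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            # Target: reward plus the discounted value of the best next action.
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q


q = q_learning()
greedy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)}
print(greedy)  # the learned action for each non-goal state
```

With these settings the greedy policy should come out as "move right" in every state, which is the shortest route to the goal.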

2. Model-Based Reinforcement Learning

Here, the agent builds (or is given) a model of the environment, meaning a way to predict the next state and reward for a given action, and uses it to evaluate outcomes before making decisions.

Example: Planning moves in chess by anticipating future scenarios.
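The sketch below illustrates the planning half of this idea using value iteration on a hypothetical five-cell corridor. It assumes the model is already known and exact, which is a simplification: a full model-based agent would typically have to learn `model` from experience first. All names and reward values are illustrative.

```python
N_STATES, GOAL = 5, 4  # hypothetical corridor: states 0..4, goal at 4
ACTIONS = (-1, +1)     # move left or move right


def model(state, action):
    """Assumed-known model: predicts (next_state, reward) without acting."""
    next_state = max(0, min(N_STATES - 1, state + action))
    return next_state, (1.0 if next_state == GOAL else -0.1)


def value_iteration(gamma=0.9, tolerance=1e-8):
    values = [0.0] * N_STATES  # the goal is terminal, so its value stays 0
    while True:
        delta = 0.0
        for s in range(GOAL):  # sweep over non-terminal states only
            best = max(r + gamma * values[s2]
                       for s2, r in (model(s, a) for a in ACTIONS))
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tolerance:
            return values


def plan(state, values, gamma=0.9):
    """Pick the action whose predicted outcome looks best under the model."""
    def score(action):
        next_state, reward = model(state, action)
        return reward + gamma * values[next_state]
    return max(ACTIONS, key=score)


values = value_iteration()
print([round(v, 3) for v in values])
print([plan(s, values) for s in range(GOAL)])
```

Note that no action is ever actually taken while computing `values`: every outcome is predicted by calling `model`, which is what distinguishes planning from trial and error.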

Key Algorithms in Reinforcement Learning

Some widely used RL algorithms include:

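- Q-Learning: Learns a value for each state-action pair, an estimate of the cumulative reward expected from taking that action in that state and acting well afterward

- Deep Q Networks (DQN): Replace the Q-learning lookup table with a neural network, so the approach scales to large state spaces such as raw game images

- Policy Gradient Methods: Directly adjust the policy's parameters in the direction that increases expected reward

- Actor-Critic Methods: Combine both ideas, with an "actor" that chooses actions and a "critic" that estimates values to guide the actor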

Each algorithm has its own strengths depending on the complexity of the problem.
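For readers who want the update rules behind these names, here is a sketch in standard textbook notation. The symbols are assumptions that this post does not define elsewhere: $\alpha$ is a learning rate, $\gamma$ a discount factor, $r$ the reward received after taking action $a$ in state $s$, $s'$ the resulting state, $\theta$ the parameters of a network or policy, $\theta^{-}$ a periodically refreshed copy of those parameters, and $G_t$ the discounted return from step $t$.

Q-learning nudges its estimate toward the reward plus the discounted value of the best next action:

$$Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a'} Q(s', a') - Q(s, a) \right]$$

DQN turns the same target into a loss for a neural network $Q(s, a; \theta)$:

$$L(\theta) = \mathbb{E}\left[ \left( r + \gamma \max_{a'} Q(s', a'; \theta^{-}) - Q(s, a; \theta) \right)^{2} \right]$$

Policy gradient methods, in their simplest (REINFORCE) form, follow the gradient of expected return with respect to the policy parameters:

$$\nabla_\theta J(\theta) = \mathbb{E}_{\pi_\theta}\left[ G_t \, \nabla_\theta \log \pi_\theta(a_t \mid s_t) \right]$$

Actor-critic methods keep that gradient but replace $G_t$ with a signal from a learned critic $V$, commonly the one-step temporal-difference error:

$$\delta_t = r_{t+1} + \gamma V(s_{t+1}) - V(s_t)$$

These are the basic forms only; practical implementations usually add refinements such as replay buffers, baselines, or clipping.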

Applications of Reinforcement Learning in the Real World

Reinforcement Learning is not just theoretical. It is applied, or actively researched, in many areas:

1. Self-Driving Cars

RL helps vehicles make real-time decisions like braking, accelerating, and turning based on road conditions.

2. Robotics

Robots learn tasks such as picking objects or walking through environments.

3. Recommendation Systems

Streaming platforms and e-commerce sites use RL to personalize recommendations.

4. Healthcare

RL is used in treatment planning and optimizing patient care strategies.

5. Finance

RL is used in algorithmic trading and risk management.

Reinforcement Learning vs Other Machine Learning Types

| Feature | Reinforcement Learning | Supervised Learning | Unsupervised Learning |
|---|---|---|---|
| Learning Style | Trial and error | Learning from labeled data | Finding patterns in unlabeled data |
| Feedback | Reward/Penalty | Explicit correct answers | No direct feedback |
| Goal | Maximize cumulative reward | Minimize prediction error | Discover hidden structure |
| Example | Game playing, robotics | Spam detection | Customer segmentation |

This comparison highlights how RL stands apart by focusing on decision-making over time rather than static predictions.

Advantages of Reinforcement Learning

Reinforcement Learning offers several benefits:

- It learns directly from interaction without needing labeled data

- It adapts to dynamic and complex environments

- It can handle long-term decision-making problems

- It improves continuously with experience

These features make RL particularly powerful in real-world applications where conditions change frequently.

Challenges and Limitations

Despite its potential, RL comes with certain challenges:

1. High Computational Cost

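Training RL models can be time-consuming and resource-intensive, because agents often need a very large number of interactions with the environment (frequently in simulation) before they behave well.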

2. Exploration Complexity

Finding the right balance between exploration and exploitation is difficult.

3. Delayed Rewards

Sometimes the reward comes much later, making it harder to learn cause-effect relationships.

4. Real-World Risks

In physical systems like robotics or healthcare, wrong actions can be costly.

Future of Reinforcement Learning

Reinforcement Learning is evolving rapidly, especially with the integration of deep learning. Future advancements are expected in:

- Autonomous systems

- Smart cities

- Personalized AI assistants

- Advanced robotics

As computing power increases and algorithms improve, RL will play a central role in building intelligent systems that can adapt and learn like humans.

Conclusion

Reinforcement Learning is more than just a machine learning technique—it is a reflection of how learning happens in the real world. From riding a bicycle to mastering a game, the principles of trial, error, and reward shape behavior over time. By embedding these principles into machines, RL enables systems to make smarter decisions, adapt to change, and continuously improve.

For students and professionals, understanding RL opens doors to some of the most exciting advancements in artificial intelligence. Whether you are building models, analyzing data, or simply exploring AI concepts, Reinforcement Learning offers a powerful framework for thinking about learning and decision-making.