Introduction: Why Deep Learning Is Reshaping the Future Faster Than You Think

Imagine a world where machines can diagnose diseases, understand human language, drive cars, and even create art. This is not a distant future—it is the present reality powered by deep learning. Over the past decade, deep learning has emerged as one of the most transformative technologies, driving innovations across industries such as healthcare, finance, marketing, and robotics. But for many students and professionals, the term still feels complex and intimidating.

This guide breaks down deep learning into clear, structured concepts—starting from basics and gradually building up to practical applications. Whether you are a beginner exploring artificial intelligence or a professional looking to strengthen your understanding, this article will help you build a solid foundation with clarity and depth.

What Is Deep Learning?

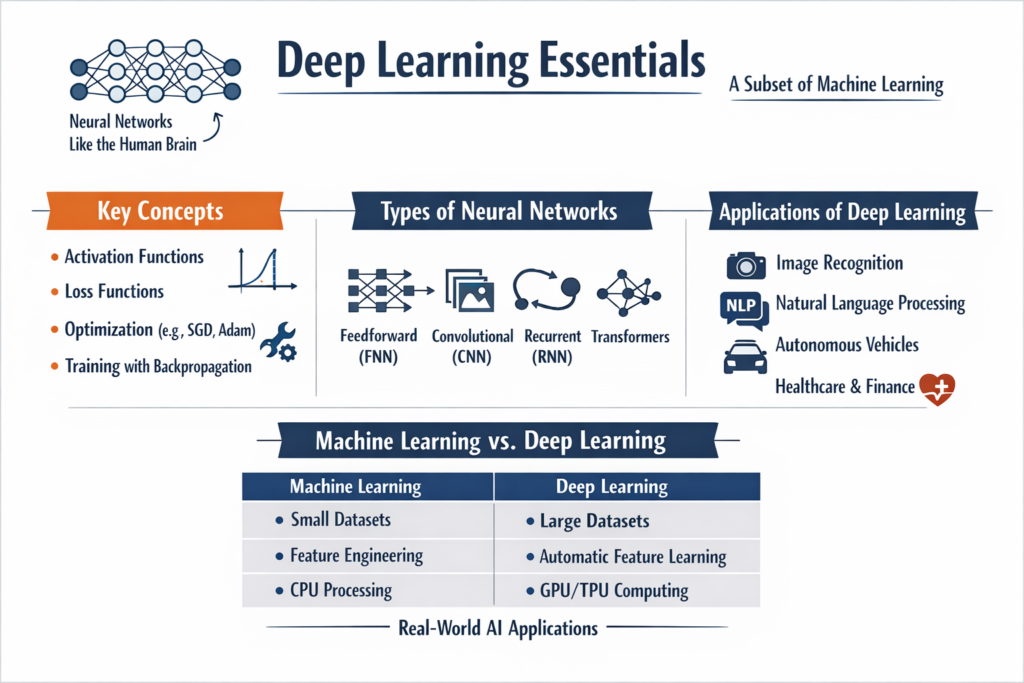

Deep learning is a subset of machine learning that uses artificial neural networks inspired by the human brain to process data and make decisions. Unlike traditional machine learning models that require manual feature extraction, deep learning models automatically learn patterns and representations from raw data.

At its core, deep learning is about learning hierarchical representations. For example, in image recognition, lower layers detect edges, middle layers detect shapes, and higher layers identify objects. This layered learning approach makes deep learning extremely powerful for complex tasks.

Deep learning models typically need large amounts of data and computational power, and are often trained on GPUs or other specialized hardware. In return, they tend to outperform traditional algorithms on unstructured data such as images, audio, and text, provided enough training data is available.

Understanding Neural Networks: The Foundation of Deep Learning

What Is a Neural Network?

A neural network is a computational model composed of layers of interconnected nodes, also known as neurons. Each neuron receives input, processes it, and passes it to the next layer.

A basic neural network consists of three types of layers:

- Input Layer: Receives raw data

- Hidden Layers: Perform computations and feature extraction

- Output Layer: Produces final predictions

Each connection between neurons has a weight, which determines the importance of the input. The network learns by adjusting these weights during training.
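As a minimal sketch of what one neuron computes, the snippet below takes a weighted sum of its inputs and passes it through a sigmoid. The bias term, the choice of sigmoid, and all numeric values are assumptions made for illustration; they are not prescribed by the description above.

```python
import math

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, squashed by a sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-z))

# Illustrative values only: the second input carries the largest weight,
# so a change in that input moves the output the most.
inputs = [0.5, 1.0, -1.0]
weights = [0.1, 0.8, 0.2]
bias = 0.0

output = neuron(inputs, weights, bias)
print(output)  # a value between 0 and 1
```

Training never changes this formula. It only changes the numbers stored in `weights` and `bias`.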

How Neural Networks Work

Neural networks operate using three key steps:

- Forward Propagation: Input data passes through layers to generate predictions

- Loss Calculation: The difference between predicted and actual output is computed

- Backward Propagation (Backpropagation): Gradients of the loss with respect to each weight are computed, and the weights are then updated in the direction that reduces the loss

This cycle runs once for every batch of data. One full pass over the dataset is called an epoch, and training usually continues for many epochs until the model reaches acceptable accuracy.
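The three steps above can be sketched in plain Python on a deliberately tiny problem: a model with a single weight `w` that has to learn the rule y = 2x. The data, the learning rate, and the use of mean squared error are assumptions picked to keep the arithmetic visible. A real network applies the same loop to millions of weights.

```python
# Toy data generated by the rule y = 2x; the model should recover w = 2.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

w = 0.0                # single trainable weight, arbitrary starting value
learning_rate = 0.01   # hypothetical step size chosen for this toy problem
losses = []

for epoch in range(200):
    # 1. Forward propagation: compute predictions with the current weight.
    preds = [w * x for x in xs]

    # 2. Loss calculation: mean squared error between predictions and targets.
    loss = sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(xs)
    losses.append(loss)

    # 3. Backward step: gradient of the loss with respect to w.
    grad = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / len(xs)

    # Weight update: move a small step against the gradient.
    w -= learning_rate * grad

print("learned w:", w)
print("first loss:", losses[0], "last loss:", losses[-1])
```

Here the gradient is written out by hand. Libraries such as Keras derive it automatically, which is what makes networks with many layers practical to train.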

Key Components of Deep Learning Models

1. Activation Functions

Activation functions introduce non-linearity into the model, allowing it to learn complex patterns. Common activation functions include:

- ReLU (Rectified Linear Unit)

- Sigmoid

- Tanh

Without activation functions, neural networks would behave like simple linear models, limiting their effectiveness.
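The sketch below gives one plain-Python definition of each function listed above and then checks the claim about linearity on a one-dimensional toy case. The weights `w1`, `b1`, `w2`, `b2` are made-up values: two stacked layers without an activation produce exactly the same outputs as a single linear layer, while inserting a ReLU between them does not.

```python
import math

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def tanh(z):
    return math.tanh(z)

# Hypothetical weights for two stacked one-dimensional "layers".
w1, b1 = 3.0, 1.0
w2, b2 = -2.0, 0.5

def stacked_linear(x):
    """Two layers with no activation in between."""
    return w2 * (w1 * x + b1) + b2

def single_linear(x):
    """The same function collapsed into one linear layer."""
    return (w2 * w1) * x + (w2 * b1 + b2)

def stacked_relu(x):
    """Two layers with a ReLU in between: no longer a straight line."""
    return w2 * relu(w1 * x + b1) + b2

for x in [-2.0, -1.0, 0.0, 1.0, 2.0]:
    print(x, stacked_linear(x), single_linear(x), stacked_relu(x))
```

For negative inputs the ReLU version flattens out while the linear versions keep changing. That bend is the non-linearity that lets deeper networks represent more than a straight line.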

2. Loss Functions

Loss functions measure how well the model is performing. Examples include:

- Mean Squared Error (for regression)

- Cross-Entropy Loss (for classification)

The goal is to minimize the loss during training.
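To make the two losses concrete, here is a minimal plain-Python sketch. The cross-entropy shown is the binary (two-class) form, and the clipping constant `eps` is an assumption added to avoid taking the logarithm of zero. Libraries use more careful numerical handling.

```python
import math

def mean_squared_error(y_true, y_pred):
    """Average squared difference; large errors are penalized heavily."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def binary_cross_entropy(y_true, y_pred, eps=1e-12):
    """Average negative log-likelihood for 0/1 labels and predicted probabilities."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip so log() never sees 0
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(y_true)

# Regression: both predictions are off by 0.5.
print(mean_squared_error([3.0, 5.0], [2.5, 5.5]))   # 0.25

# Classification: confident and right vs. confident and wrong.
good = binary_cross_entropy([1, 0], [0.9, 0.1])
bad = binary_cross_entropy([1, 0], [0.1, 0.9])
print(good, bad)
```

The confidently wrong predictions produce a far larger loss than the confidently right ones, which is the signal training uses to correct the model.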

3. Optimizers

Optimizers adjust model weights to minimize loss. Popular optimizers include:

- Gradient Descent

- Adam Optimizer

- RMSprop

These algorithms determine how quickly and effectively the model learns.
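As a rough illustration of how update rules differ, the sketch below minimizes the toy loss f(w) = (w - 3)^2 with plain gradient descent and with the standard Adam update. The loss, the learning rate, and the step count are assumptions chosen for the demo; the Adam defaults `beta1=0.9`, `beta2=0.999`, `eps=1e-8` are the commonly used values. RMSprop is omitted to keep the example short.

```python
import math

def grad(w):
    """Gradient of the toy loss f(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)

def gradient_descent(w, steps, lr=0.1):
    for _ in range(steps):
        w -= lr * grad(w)            # step proportional to the raw gradient
    return w

def adam(w, steps, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    m = 0.0                          # running average of gradients
    v = 0.0                          # running average of squared gradients
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        m_hat = m / (1 - beta1 ** t)  # bias correction for the early steps
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

w_gd = gradient_descent(0.0, steps=300)
w_adam = adam(0.0, steps=300)
print("gradient descent:", w_gd)
print("adam:", w_adam)
```

Both end up near the minimum at w = 3. On a problem this simple, plain gradient descent is hard to beat; Adam's per-parameter scaling tends to matter more when gradients differ widely in size across the weights of a large network.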

4. Epochs and Batch Size

- Epoch: One complete pass through the dataset

- Batch Size: Number of samples processed at once

Choosing the right combination affects training speed and accuracy.
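The relationship between the two settings is simple arithmetic, sketched below with 100 samples, a batch size of 8, and 10 epochs (the same numbers used in the Keras example later in this guide). The helper `iterate_minibatches` is a hypothetical name for this illustration, not a library function.

```python
import math

def iterate_minibatches(samples, batch_size):
    """Yield consecutive slices of at most batch_size samples."""
    for start in range(0, len(samples), batch_size):
        yield samples[start:start + batch_size]

samples = list(range(100))   # stand-in for 100 training examples
batch_size = 8
epochs = 10

# Each batch triggers one weight update; the last batch may be smaller.
updates_per_epoch = math.ceil(len(samples) / batch_size)
total_updates = updates_per_epoch * epochs

batches = list(iterate_minibatches(samples, batch_size))
print(updates_per_epoch)   # 13
print(total_updates)       # 130
print(len(batches[-1]))    # 4 samples left over in the final batch
```

A smaller batch size means more, noisier updates per epoch. A larger one means fewer, smoother updates and higher memory use per step.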

Types of Neural Networks

1. Feedforward Neural Networks (FNN)

These are the simplest type, where data flows in one direction—from input to output. They are commonly used for basic classification and regression tasks.

2. Convolutional Neural Networks (CNNs)

CNNs are designed for image and spatial data processing. They use convolutional layers to automatically detect features such as edges, textures, and patterns.

Applications include:

- Image classification

- Face recognition

- Medical imaging
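To show what a convolutional layer computes, here is a minimal plain-Python sketch that slides a 3x3 kernel over a tiny image. The kernel is a hand-written vertical-edge detector and the image is made up. In a real CNN the kernel values are learned during training, and libraries add padding, strides, and multiple channels.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    Like most deep learning libraries, this computes cross-correlation:
    the kernel is applied as written, without flipping.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output

# 4x6 image: dark (0) on the left half, bright (1) on the right half.
image = [[0, 0, 0, 1, 1, 1] for _ in range(4)]

# Responds where brightness increases from left to right.
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

feature_map = conv2d(image, kernel)
print(feature_map)  # [[0.0, 3.0, 3.0, 0.0], [0.0, 3.0, 3.0, 0.0]]
```

The output is zero over the flat regions and large exactly where the dark-to-bright boundary sits, which is what "detecting an edge" means in practice.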

3. Recurrent Neural Networks (RNNs)

RNNs are used for sequential data, such as time series or text. They carry a hidden state from one step to the next, which acts as a memory of previous inputs and makes them suitable for:

- Language modeling

- Speech recognition

- Stock prediction

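Variants such as LSTM (Long Short-Term Memory) add gating mechanisms that help the network retain information over longer sequences, easing the long-term dependency problem that plain RNNs struggle with.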
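The idea of memory in a recurrent network can be sketched with a single hidden unit in plain Python. The update rule `h = tanh(w_x * x + w_h * h + b)` is the textbook form of a simple RNN cell, and the weights below are arbitrary illustrative values rather than trained ones.

```python
import math

def rnn_forward(sequence, w_x, w_h, b, h0=0.0):
    """Run a one-unit recurrent cell over a sequence, returning every hidden state."""
    h = h0
    states = []
    for x in sequence:
        # The new state depends on the current input AND the previous state.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# Two sequences that end with the same inputs but start differently.
states_a = rnn_forward([1.0, 0.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)
states_b = rnn_forward([0.0, 0.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)

print(states_a)
print(states_b)
```

The final state of the first sequence is still non-zero because it remembers the initial 1.0, yet that trace shrinks at every step. This fading of older information is the long-term dependency problem that gated variants such as LSTM are designed to ease.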

4. Transformer Models

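Transformers are the backbone of modern NLP systems. They use attention mechanisms to relate every position in a sequence to every other position in parallel, rather than stepping through the sequence one element at a time, which speeds up training and helps capture long-range context.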

Applications include:

- Chatbots

- Translation systems

- Text summarization
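The attention mechanism at the heart of a Transformer can be sketched as scaled dot-product attention in plain Python. As a simplification, the example uses the raw token vectors as queries, keys, and values at once. Real Transformers first pass them through learned projection matrices and run several attention heads in parallel. The token vectors are made up.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs, weight_rows = [], []
    for q in queries:
        # Score this position against every position in the sequence.
        weights = softmax([dot(q, k) / scale for k in keys])
        # The output is the weighted average of the value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
        weight_rows.append(weights)
    return outputs, weight_rows

# Three made-up 2-dimensional token vectors.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how strongly one token attends to every token in the sequence. Because no row depends on another, all positions can be computed at the same time.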

Deep Learning vs Machine Learning: A Clear Comparison

| Feature | Machine Learning | Deep Learning |
|---|---|---|
| Data Requirement | Works with small to medium datasets | Requires large datasets |
| Feature Engineering | Manual | Automatic |
| Performance | Good for structured data | Excellent for unstructured data |
| Training Time | Faster | Slower |
| Interpretability | More interpretable | Less interpretable |
| Hardware Requirement | CPU sufficient | Requires GPU/TPU |

This comparison highlights why deep learning is preferred for complex problems but may not always be necessary for simpler tasks.

Real-World Applications of Deep Learning

1. Healthcare

Deep learning models can flag signs of diseases such as cancer in medical images, often with high accuracy on the tasks they were trained for. They assist doctors in diagnosis and can help reduce human error.

2. Natural Language Processing (NLP)

From chatbots to voice assistants, deep learning powers systems that understand and generate human language. Applications include sentiment analysis, translation, and document summarization.

3. Computer Vision

Deep learning enables machines to interpret visual data. It is widely used in:

- Self-driving cars

- Surveillance systems

- Facial recognition

4. Finance

Fraud detection, risk assessment, and algorithmic trading increasingly use deep learning models that analyze large volumes of financial data, in some cases in real time.

5. E-commerce and Marketing

Recommendation systems, customer segmentation, and personalized ads are powered by deep learning algorithms that analyze user behavior.

Simple Deep Learning Example in Python

Below is a basic example using Keras to build a neural network for classification:

```python
import numpy as np
import tensorflow as tf
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense

# Create model
model = Sequential([
    Dense(16, activation='relu', input_shape=(10,)),
    Dense(8, activation='relu'),
    Dense(1, activation='sigmoid')
])

# Compile model
model.compile(optimizer='adam',
              loss='binary_crossentropy',
              metrics=['accuracy'])

# Dummy data
X = np.random.rand(100, 10)
y = np.random.randint(2, size=(100, 1))

# Train model
model.fit(X, y, epochs=10, batch_size=8)

# Evaluate
loss, accuracy = model.evaluate(X, y)
print("Accuracy:", accuracy)
```

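This example shows how few lines a working model takes with a modern library. Because the inputs and labels here are random, there is no real pattern to learn, so the reported accuracy only reflects how well the network fits noise in its own training data. On a real dataset, accuracy should be measured on samples the model has not seen.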
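The example above evaluates the model on the same data it was trained on. One common way to get a more honest estimate is to hold some samples back, sketched below in plain Python. The helper `holdout_split`, the 20% test fraction, and the fixed seed are assumptions for illustration; they are not part of Keras.

```python
import random

def holdout_split(samples, labels, test_fraction=0.2, seed=0):
    """Shuffle the indices once, then cut them into a train part and a test part."""
    indices = list(range(len(samples)))
    random.Random(seed).shuffle(indices)
    n_test = round(len(samples) * test_fraction)
    test_idx, train_idx = indices[:n_test], indices[n_test:]

    def pick(seq, idx):
        return [seq[i] for i in idx]

    return (pick(samples, train_idx), pick(samples, test_idx),
            pick(labels, train_idx), pick(labels, test_idx))

samples = list(range(100))              # stand-in for 100 feature rows
labels = [s % 2 for s in samples]       # stand-in for 0/1 labels

X_train, X_test, y_train, y_test = holdout_split(samples, labels)
print(len(X_train), len(X_test))  # 80 20
```

With real data, the training part would be passed to `model.fit` and the held-out part to `model.evaluate`, so the reported accuracy reflects samples the model has never seen.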

Challenges in Deep Learning

Despite its advantages, deep learning has several challenges:

- Data Dependency: Requires large labeled datasets

- Computational Cost: Needs powerful hardware

- Overfitting: Models may memorize data instead of generalizing

- Black Box Nature: Difficult to interpret decisions

Addressing these challenges is an active area of research.

Future of Deep Learning

Deep learning continues to evolve rapidly, with advancements in areas like:

- Explainable AI

- Edge AI (running models on devices)

- Generative AI (creating content like text and images)

- Multimodal learning (combining text, images, and audio)

As technology advances, deep learning will become more accessible and efficient, opening new opportunities across industries.

Conclusion

Deep learning is not just a buzzword—it is a powerful technology shaping the future of artificial intelligence. By understanding neural networks, learning mechanisms, and real-world applications, anyone can begin their journey into this exciting field. While it may seem complex at first, breaking it down into fundamental concepts makes it far more approachable and practical.